AI is Overhyped: When Will the Bubble Burst?


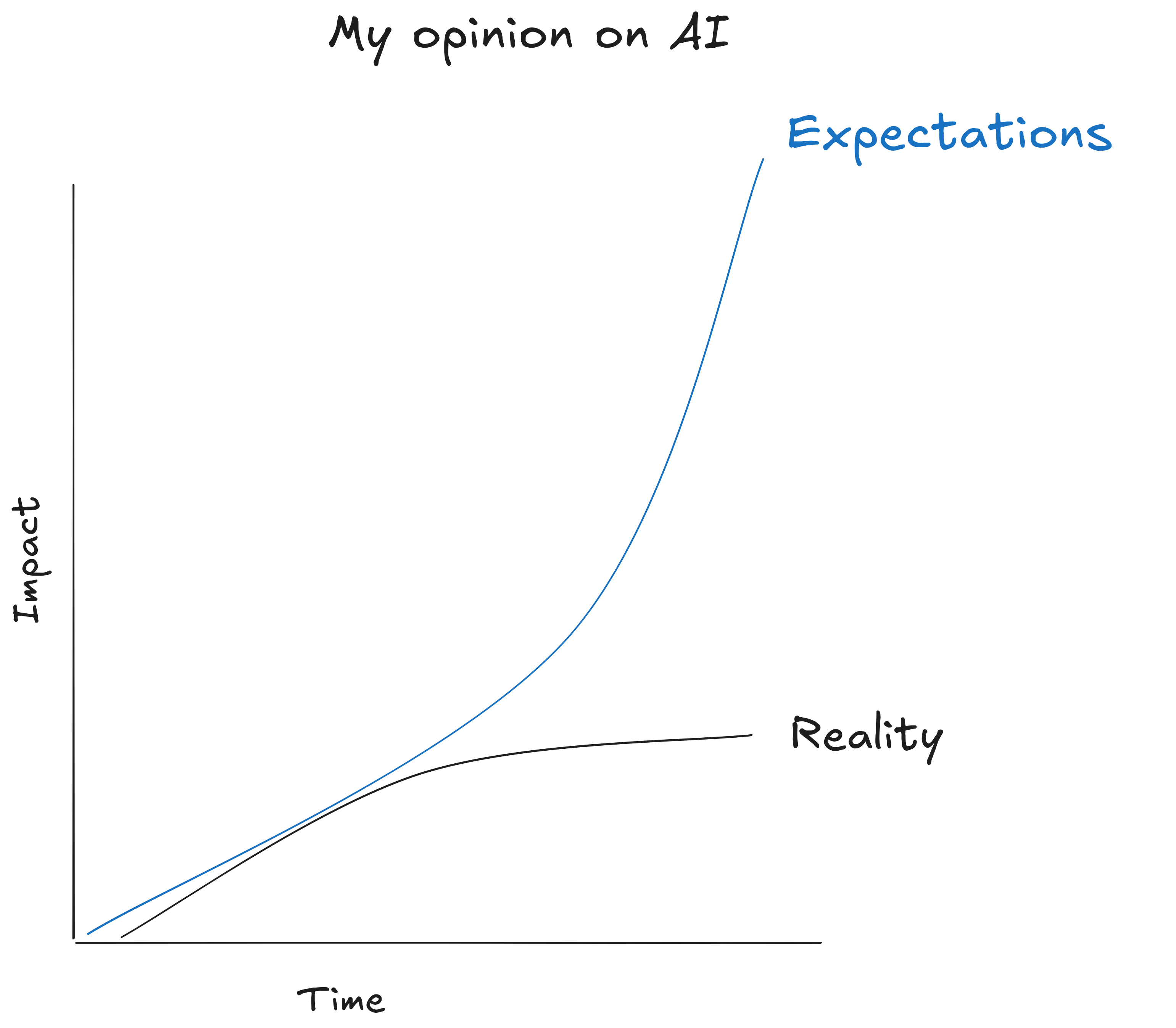

Before you read this post, I want to make something very clear. A bubble doesn’t mean something is useless, fake, or about to disappear. It means that its market valuation is way above its real value. Eventually the market will correct itself and that valuation will drop.

What’s being debated here isn’t whether AI is useful or not—obviously it is, at least for a specific sector of the population (programmers)—what’s being debated is whether it’s inflated or not in the market.

I believe that while AI has astonishing capabilities, it’s far from revolutionizing the world and achieving the total automation that the general public expects in the short term. The AGI promised to replace all employees is still far away, if it’s even possible. The closest thing we have is Searle’s Chinese Room.

And I’m not alone in this. Even companies as big as Apple dropped out of the AI race. Their reasons? You can probably find them detailed in their paper, The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity.

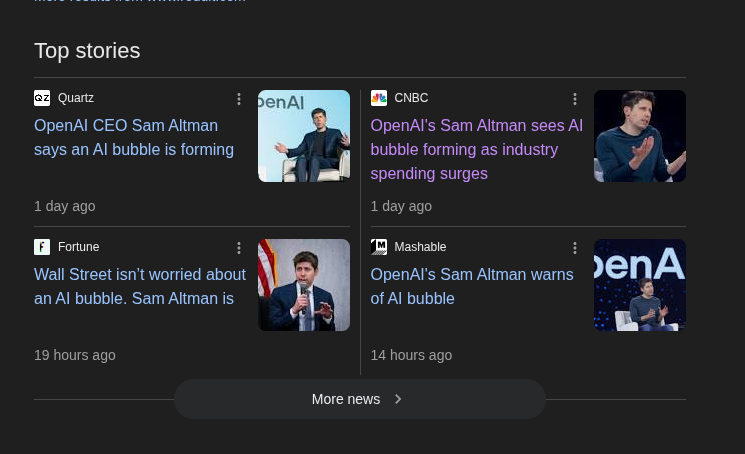

Even Sam Altman admits to seeing an AI bubble, according to the media:

“Are we in a phase where investors as a whole are overexcited about AI? My opinion is yes. Is AI the most important thing to happen in a very long time? My opinion is also yes.”

Many AI companies can’t live up to expectations

Builder.AI, a promising startup that offered AI-powered website creation, turned out to be little more than a group of Indian developers working behind the scenes, and it just declared bankruptcy. See? There’s no AGI.

This is just more proof that many AI companies are playing cat and mouse with investor money. They try to create expectations to get money from angel investors and whoever else, and then crash spectacularly when they don’t deliver on their promises. But by then, who cares? They’ve already profited and business involves risks, so…

Similarly, Devin AI faded into obscurity. At the same time, we’re seeing the law of diminishing returns with each new LLM that comes out—generative models are reaching that point too.

In fact, some companies have already abandoned the idea of replacing developers and shifted their focus to becoming code creation tools, like Bolt, Lovable or V0, and others are emerging to position themselves as code assistants for developers: Opencode, Claude Code, Codex, etc.

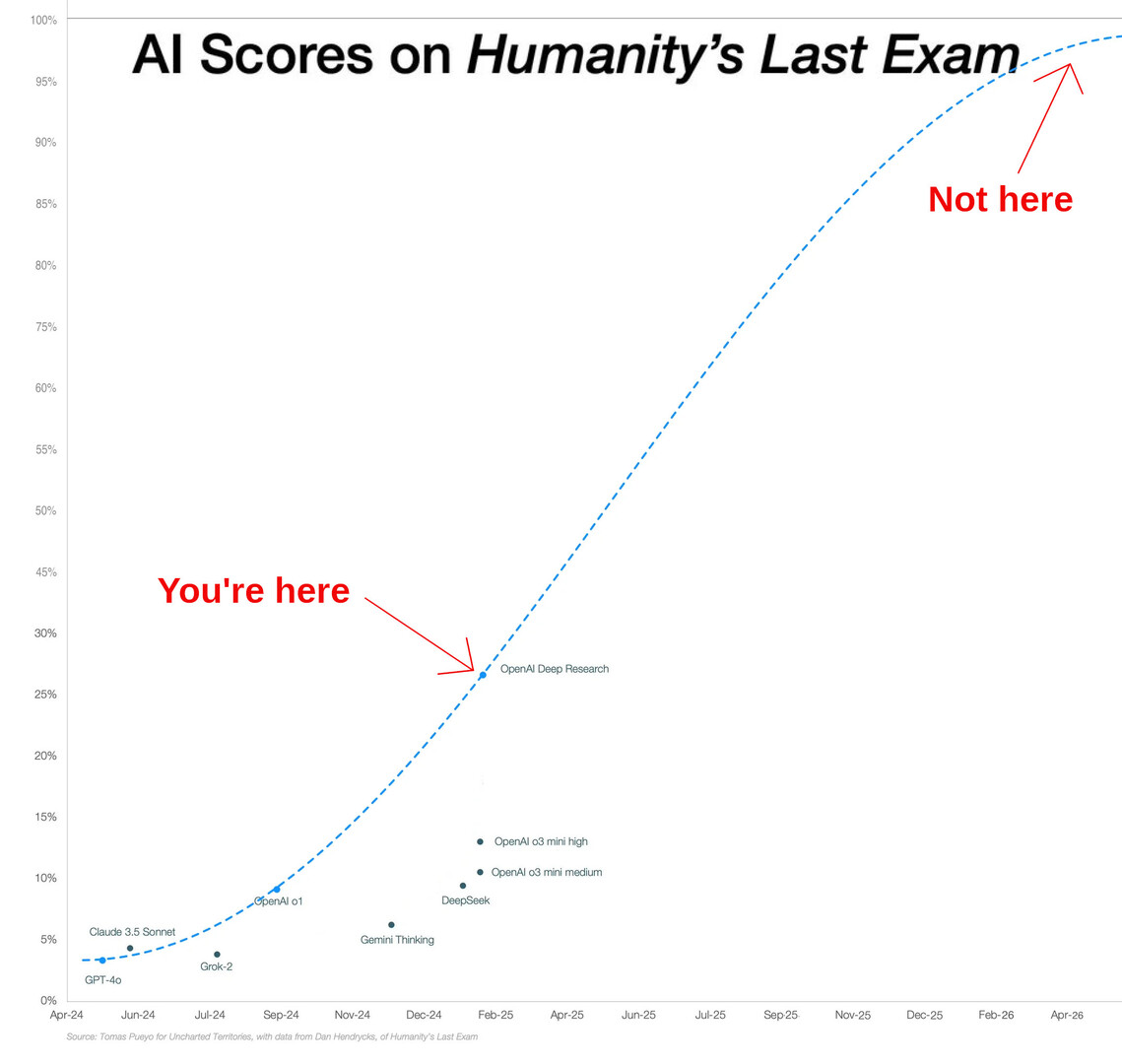

Humanity’s Last Exam shows we’re still very far from AGI

Let’s consider the scores from Humanity’s Last Exam. As you can see, we’re at around 30%, which is frankly a lot. I don’t believe we’ve seen such a significant breakthrough in a long time. Just thinking about the possibilities makes me tremble. But investors and clickbait media are trying to convince you that we’re at around 100%, which is a fallacy of enormous magnitude.

Don’t get me wrong, these scores are impressive, but they’re not the magic bullet that media and investors promote.

The AI bubble is fueled by investor and consumer ignorance

All it takes is for a company to use AI to give it an air of mystery that makes it irresistible to the general public, and to investors. And for a simple reason: as a society, we don’t understand how AI works internally, we don’t understand the underlying statistics, and so we attribute mystical properties to it.

Consumers, usually businesses, expect AI to reduce (or eliminate) hiring costs, and investors expect the investment of their lives.

It’s not that AI isn’t valuable. It is. It’s just that, right now, its value is inflated.

The financial frenzy for AI and capital investment

Of course, investors don’t want to be left out of this party and they’re so eager to win the lottery and become the next Zuckerberg of artificial intelligence that they open their wallets without thinking every time they hear the word “AI” in the same sentence as “disruptive.”

But in my opinion, all of this is nothing more than a bunch of companies and entrepreneurs playing along with that frenzy, as I suspect the creators of Devin, the supposed replacement for programmers, did.

The market is trying to make a quick buck from laypeople’s sudden and growing interest in something as abstract and esoteric as AI.

AI-driven layoffs

You’ve probably witnessed the atmosphere of uncertainty in the tech industry. You’re afraid of losing your job, afraid of being replaced. While it’s true that the bar is higher and code factories are no longer valuable, layoffs aren’t driven by AI but by other factors.

Companies have taken advantage of the circumstances to do AI-washing with layoffs and blame AI for their financial decisions.

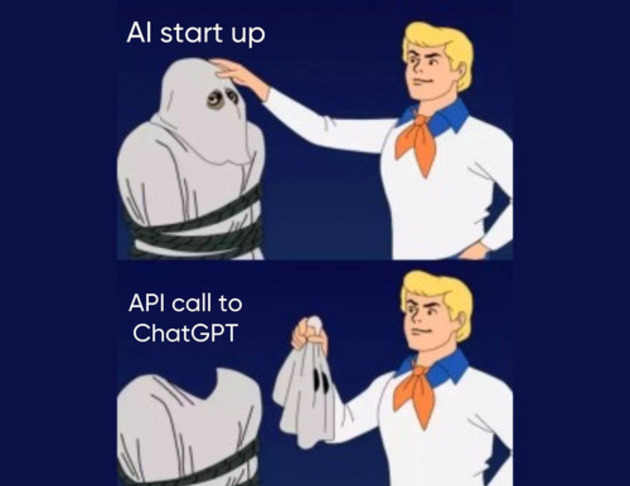

Many AI solutions are just ChatGPT wrappers

On top of that, most people involved in the financial side of AI don’t realize that not much has really changed in the code world. Most solutions just use ChatGPT under the hood, with a pretty interface to present themselves as the next unicorn and seek easy money from investors.

Using ChatGPT for your business isn’t bad in itself, but if an app is just a wrapper of GPT, the risk of becoming a commodity is high. Are we going to have thousands of different apps that solve the same problem and are just ChatGPT wrappers? You can’t have a unicorn that anyone can replicate with a few lines of code.
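To make the "few lines of code" point concrete, here is a minimal sketch of what a wrapper product boils down to. Everything in it is hypothetical: `make_wrapper_app`, `complete`, and `stub_model` are names I made up for illustration, and the stub stands in for whatever hosted model a real wrapper would call. It is not any vendor's actual API.

```python
from typing import Callable

def make_wrapper_app(complete: Callable[[str], str], system_prompt: str) -> Callable[[str], str]:
    """Build a 'product' out of nothing but a prompt template and a model call.

    `complete` is a hypothetical stand-in for a call to a hosted LLM.
    """
    def app(user_input: str) -> str:
        # The entire "secret sauce": glue a fixed instruction to the user's text.
        prompt = f"{system_prompt}\n\nUser: {user_input}"
        return complete(prompt)
    return app

def stub_model(prompt: str) -> str:
    # Placeholder so the sketch runs offline; a real wrapper would call a model here.
    return "ECHO: " + prompt

legal_bot = make_wrapper_app(stub_model, "You are a helpful legal assistant.")
print(legal_bot("Summarize this contract."))
```

Swap the system prompt and you have a "different" startup, which is exactly why the moat is so thin.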

Even though protocols have been created for interaction with LLMs, like the Model Context Protocol, AI wrappers are still what’s most common.

And it’s not that the above is bad, but I’d rather we explore all the possibilities that AI has to offer: training neural networks to automate every tedious aspect of society and free humans from the paradigm of repetitive 9-to-5 tasks, or maybe designing better drugs through artificial intelligence. I do want more investment in AI, but investment that “adds value” rather than just seeking quick returns.

Companies are running out of data to train LLMs

There’s also a physical limit to AI development: the amount of data and processing capacity. The second we can solve, but what about the first? AI is only as good as the data used to train it, and this data will become increasingly scarce. Over 50% of online content is now AI-generated. We’re entering the law of diminishing returns. And I’m not the only one who thinks this.

The greed driving AI usage is going to flood the internet with a myriad of AI-written articles that will be used to feed AI, which will regurgitate them over and over, like in the movie The Human Centipede.
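A toy simulation can illustrate that feedback loop (an effect sometimes called model collapse). This is only a sketch under strong assumptions: the "model" is just a normal distribution fitted to a handful of numbers, and each generation is trained exclusively on the previous generation's output. The function name `simulate_feedback_loop` and its parameters are mine, and nothing here measures a real LLM.

```python
import random
import statistics

def simulate_feedback_loop(generations, sample_size, seed=0):
    """Repeatedly fit a normal distribution to data sampled from the previous fit."""
    rng = random.Random(seed)
    # Generation 0: stand-in for "human-written" data, drawn from a standard normal.
    data = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
    spread_history = []
    for _ in range(generations):
        mu = statistics.fmean(data)      # "train" the model on the current data
        sigma = statistics.pstdev(data)
        spread_history.append(sigma)
        # The next generation only ever sees the model's own output.
        data = [rng.gauss(mu, sigma) for _ in range(sample_size)]
    spread_history.append(statistics.pstdev(data))
    return spread_history

history = simulate_feedback_loop(generations=200, sample_size=10)
print(f"spread of the original data:  {history[0]:.3f}")
print(f"spread after 200 generations: {history[-1]:.3g}")
```

With so few samples per generation, the spread of the data tends to shrink toward zero: each round loses a bit of the original variety and nothing ever puts it back.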

The end of the AI bubble and the new normal

That said, it might seem like I believe everything about AI is smoke and mirrors, but that’s not the case. I believe AI is here to stay, but its potential for social revolution, at least in the short term, will be limited to a very small group of companies, among which I include OpenAI, Google, Microsoft, and the usual players.

In my opinion, AI systems are the ultimate autocomplete, be it for text, images, or video, and nothing more. AGI is a milestone that remains distant in the future.

Machines don’t learn. What a typical “learning machine” does is finding a mathematical formula, which, when applied to a collection of inputs (called “training data”), produces the desired outputs. This mathematical formula also generates the correct outputs for most other inputs (distinct from the training data) on the condition that those inputs come from the same or a similar statistical distribution as the one the training data was drawn from. -Andriy Burkov
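Burkov's point about the "same or a similar statistical distribution" can be seen in a few lines. The sketch below is my own toy example, not his: the "learning machine" is a straight line fitted by least squares, the hidden target is `x * x`, and the ranges (0 to 1 for training, 5 to 6 for the shifted inputs) are arbitrary assumptions.

```python
import random

def fit_line(xs, ys):
    """Ordinary least squares for y = a*x + b (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    return a, mean_y - a * mean_x

def mean_abs_error(a, b, xs, ys):
    return sum(abs((a * x + b) - y) for x, y in zip(xs, ys)) / len(xs)

def target(x):
    # The "real world" behind the data; the model only sees samples of it.
    return x * x

rng = random.Random(0)
train_x = [rng.uniform(0.0, 1.0) for _ in range(200)]
a, b = fit_line(train_x, [target(x) for x in train_x])

# New inputs from the same range as the training data...
same_dist_x = [rng.uniform(0.0, 1.0) for _ in range(200)]
# ...and inputs from a range the model never saw.
shifted_x = [rng.uniform(5.0, 6.0) for _ in range(200)]

in_dist_error = mean_abs_error(a, b, same_dist_x, [target(x) for x in same_dist_x])
shifted_error = mean_abs_error(a, b, shifted_x, [target(x) for x in shifted_x])
print(f"error on familiar inputs: {in_dist_error:.3f}")
print(f"error on shifted inputs:  {shifted_error:.3f}")
```

The formula looks fine on inputs that resemble its training data and falls apart on inputs that don't. It found a pattern that fits the samples; it didn't learn what squaring is.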

The financial bubble will burst, the hype will die, and we’ll reach a plateau where people will understand the real potential of AI, as well as its limitations, and it will become just another tool at our disposal. Yes, the internet will change forever at that moment. And finally AI bros will move on to the next trend and stop spreading FOMO on social media.